The More You Know: Using Knowledge Graphs for Image Classification

Abstract: One characteristic that sets humans apart from modern learning-based computer vision algorithms is the ability to acquire knowledge about the world and use that knowledge to reason about the visual world. Humans can learn about the characteristics of objects and the relationships that occur between them to learn a large variety of visual concepts, often with few examples. This paper investigates the use of structured prior knowledge in the form of knowledge graphs and shows that using this knowledge improves performance on image classification. We build on recent work on end-to-end learning on graphs, introducing the Graph Search Neural Network as a way of efficiently incorporating large knowledge graphs into a vision classification pipeline. We show in a number of experiments that our method outperforms standard neural network baselines for multi-label classification.

Borrowing Treasures from the Wealthy: Deep Transfer Learning through Selective Joint Fine-Tuning

Abstract: Deep neural networks require a large amount of labeled training data during supervised learning. However, collecting and labeling so much data might be infeasible in many cases. In this paper, we introduce a deep transfer learning scheme, called selective joint fine-tuning, for improving the performance of deep learning tasks with insufficient training data. In this scheme, a target learning task with insufficient training data is carried out simultaneously with another source learning task with abundant training data. However, the source learning task does not use all existing training data. Our core idea is to identify and use a subset of training images from the original source learning task whose low-level characteristics are similar to those from the target learning task, and jointly fine-tune shared convolutional layers for both tasks. Specifically, we compute descriptors from linear or nonlinear filter bank responses on training images from both tasks, and use such descriptors to search for a desired subset of training samples for the source learning task. Experiments demonstrate that our deep transfer learning scheme achieves state-of-the-art performance on multiple visual classification tasks with insufficient training data for deep learning. Such tasks include Caltech 256, MIT Indoor 67, and fine-grained classification problems (Oxford Flowers 102 and Stanford Dogs 120). In comparison to fine-tuning without a source domain, the proposed method can improve the classification accuracy by 2% - 10% using a single model.

Exclusivity-Consistency Regularized Multi-view Subspace Clustering

Abstract: Multi-view subspace clustering aims to partition a set of multi-source data into their underlying groups. To boost the performance of multi-view clustering, numerous subspace learning algorithms have been developed in recent years, but with rare exploitation of the representation complementarity between different views as well as the indicator consistency among the representations, let alone considering them simultaneously. In this paper, we propose a novel multi-view subspace clustering model that attempts to harness the complementary information between different representations by introducing a novel position-aware exclusivity term. Meanwhile, a consistency term is employed to make these complementary representations to further have a common indicator. We formulate the above concerns into a unified optimization framework. Experimental results on several benchmark datasets are conducted to reveal the effectiveness of our algorithm over other state-of-the-arts.
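
The sample-selection step described in the selective joint fine-tuning abstract (searching the source training set for images whose low-level descriptors resemble those of the target images) can be illustrated roughly as follows. This is only a minimal sketch under stated assumptions: the descriptors are taken as already computed and given as plain lists of floats, the function name `select_source_subset` and the parameter `k` are hypothetical, and Euclidean nearest-neighbor search stands in for whatever similarity measure the paper actually uses.

```python
from math import dist  # Euclidean distance, available in Python 3.8+


def select_source_subset(target_desc, source_desc, k=2):
    """Return sorted indices of source samples that are among the k nearest
    neighbors (by Euclidean distance) of at least one target descriptor.

    Assumption: both arguments are lists of equal-length float vectors.
    """
    selected = set()
    for t in target_desc:
        # Rank all source samples by distance to this target descriptor.
        ranked = sorted(range(len(source_desc)),
                        key=lambda i: dist(t, source_desc[i]))
        # Keep the k closest source samples for this target sample.
        selected.update(ranked[:k])
    return sorted(selected)


# Toy descriptors (made up for illustration only).
source = [[0.0, 0.0], [1.0, 0.0], [10.0, 10.0], [11.0, 10.0], [50.0, 50.0]]
target = [[0.1, 0.0], [10.2, 10.0]]

subset = select_source_subset(target, source, k=2)
print(subset)  # [0, 1, 2, 3]
```

In this toy run the far-away source sample at index 4 is never selected, so only the source samples that resemble the target data would take part in joint fine-tuning.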

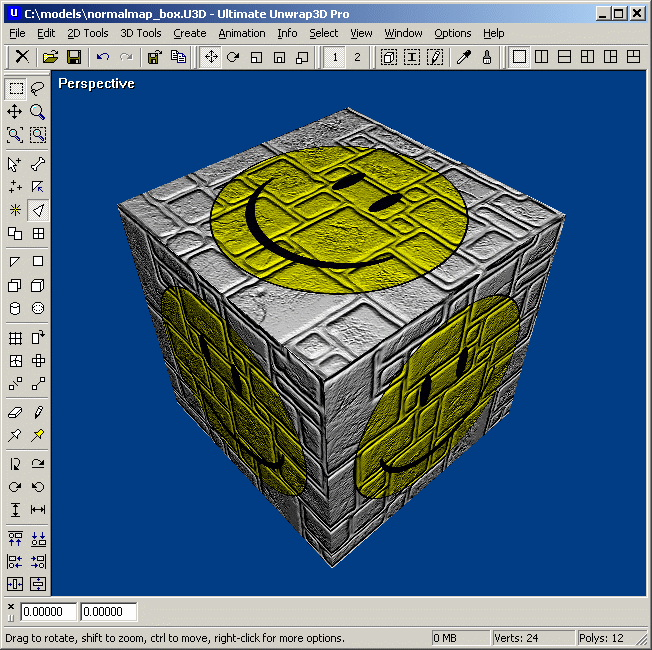

ULTIMATE UNWRAP 3D CRA WINDOWS

Ultimate Unwrap 3D is a specialty Windows UV mapping tool for unfolding and unwrapping 3D models. It includes an easy-to-use UV coordinate editor, a standard set of UV mapping projections such as planar, box, cylindrical, and spherical, as well as advanced UV mapping projections such as face UV mapping, camera UV mapping, and unwrap UV faces for difficult-to-map areas.
